Function calling lets an LLM act as a bridge between natural-language prompts and real-world code or APIs. Instead of simply generating text, the model decides when to invoke a predefined function, emits a structured JSON call with the function name and arguments, and then waits for your application to execute that call and return the results. This back-and-forth can loop, potentially invoking multiple functions in sequence, which enables multi-step interactions under conversational control. In this tutorial, we’ll implement a weather assistant with Gemini 2.0 Flash to demonstrate how to set up and manage that function-calling cycle. We’ll cover two variants: declaring the tool with a JSON Schema, and passing a Python function directly.

By integrating function calls, we turn a chat interface into a tool for real-time tasks, whether fetching live weather data, checking order statuses, scheduling appointments, or updating databases. Users no longer fill out complex forms or navigate multiple screens; they describe what they need, and the LLM orchestrates the underlying actions. This makes it easier to build AI agents that access external data sources, perform transactions, or trigger workflows, all within a single conversation.

Function Calling with Google Gemini 2.0 Flash

We install the Gemini Python SDK (google-genai ≥ 1.0.0), along with geopy for converting location names to coordinates and requests for making HTTP calls, ensuring all the core dependencies for our Colab weather assistant are in place.

We import the Gemini SDK, set your API key, and create a genai.Client instance configured to use the “gemini-2.0-flash” model, establishing the foundation for all subsequent function-calling requests.
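The setup code for this step is not shown in the text, so here is a minimal sketch of what it might look like. It assumes the key is stored in an environment variable named GEMINI_API_KEY (an assumption; use whatever secret storage you prefer) and that google-genai is installed. The helper names load_api_key and make_client are hypothetical.

```python
import os

# Model name taken from the tutorial text.
model_id = "gemini-2.0-flash"


def load_api_key(env_var: str = "GEMINI_API_KEY") -> str:
    """Read the API key from the environment (the variable name is an assumption)."""
    key = os.environ.get(env_var, "").strip()
    if not key:
        raise RuntimeError(f"Set the {env_var} environment variable first.")
    return key


def make_client():
    """Create a genai.Client; the SDK is imported lazily so load_api_key has no dependencies."""
    from google import genai  # requires the google-genai package

    return genai.Client(api_key=load_api_key())
```

With the key in place, `client = make_client()` gives the client object that the following snippets refer to.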

    res = client.models.generate_content(
        model=model_id,
        contents=["Tell me 1 good fact about Nuremberg."]
    )
    print(res.text)

We send a user prompt (“Tell me 1 good fact about Nuremberg.”) to the Gemini 2.0 Flash model via generate_content, then print out the model’s text reply, demonstrating a basic, end-to-end text‐generation call using the SDK.

Function Calling with JSON Schema

    weather_function = {
        "name": "get_weather_forecast",
        "description": "Retrieves the weather using Open-Meteo API for a given location (city) and a date (yyyy-mm-dd). Returns a dictionary with the time and temperature for each hour.",
        "parameters": {
            "type": "object",
            "properties": {
                "location": {
                    "type": "string",
                    "description": "The city and state, e.g., San Francisco, CA"
                },
                "date": {
                    "type": "string",
                    "description": "The forecasting date for when to get the weather, format (yyyy-mm-dd)"
                }
            },
            "required": ["location", "date"]
        }
    }

Here, we define a JSON Schema for our get_weather_forecast tool, specifying its name, a descriptive prompt to guide Gemini on when to use it, and the exact input parameters (location and date) with their types, descriptions, and required fields, so the model can emit valid function calls.
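Because the model's arguments arrive as plain data, it can be worth checking them against the declaration before executing anything. The following is a minimal sketch of such a check, not part of the SDK: validate_args is a hypothetical helper, and it only handles flat schemas with the basic types listed in its lookup table.

```python
# A compact copy of the declaration from this section, so the sketch runs on its own.
weather_function = {
    "name": "get_weather_forecast",
    "parameters": {
        "type": "object",
        "properties": {
            "location": {"type": "string"},
            "date": {"type": "string"},
        },
        "required": ["location", "date"],
    },
}

# JSON Schema type names mapped to Python types (flat schemas only).
_JSON_TYPES = {"string": str, "integer": int, "number": (int, float), "boolean": bool}


def validate_args(declaration: dict, args: dict) -> list[str]:
    """Return a list of problems; an empty list means the arguments look valid."""
    params = declaration["parameters"]
    problems = []
    for name in params.get("required", []):
        if name not in args:
            problems.append(f"missing required argument: {name}")
    for name, value in args.items():
        spec = params["properties"].get(name)
        if spec is None:
            problems.append(f"unexpected argument: {name}")
            continue
        expected = _JSON_TYPES.get(spec.get("type"))
        if expected is not None and not isinstance(value, expected):
            problems.append(f"{name} should be of type {spec['type']}")
    return problems
```

Returning a list of problems rather than raising makes it easy to send the complaints back to the model as a tool error and let it retry with corrected arguments.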

    from google.genai.types import GenerateContentConfig

    config = GenerateContentConfig(
        system_instruction="You are a helpful assistant that uses tools to access and retrieve information from a weather API. Today is 2025-03-04.",
        tools=[{"function_declarations": [weather_function]}],
    )

We create a GenerateContentConfig that tells Gemini it’s acting as a weather-retrieval assistant and registers the weather function under tools, so the model knows how to generate structured calls when asked for forecast data.

    response = client.models.generate_content(
        model=model_id,
        contents="What's the weather in Berlin today?"
    )
    print(response.text)

This call sends the bare prompt (“What’s the weather in Berlin today?”) without including your config (and thus no function definitions), so Gemini falls back to plain text completion, offering generic advice instead of invoking your weather‐forecast tool.

    response = client.models.generate_content(
        model=model_id,
        config=config,
        contents="What's the weather in Berlin today?"
    )
    # Each part may carry a function call instead of text.
    for part in response.candidates[0].content.parts:
        print(part.function_call)

By passing in config (which includes your JSON‐schema tool), Gemini recognizes it should call get_weather_forecast rather than reply in plain text. The loop over response.candidates[0].content.parts then prints out each part’s .function_call object, showing you exactly which function the model decided to invoke (with its name and arguments).
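As a small illustration of that inspection step, the sketch below collects the name and arguments of every function call in a response. extract_function_calls is a hypothetical helper, and the response object here is a stand-in built with SimpleNamespace that only mimics the attribute path used in this section (candidates[0].content.parts), so it runs without an API key.

```python
from types import SimpleNamespace


def extract_function_calls(response) -> list[tuple[str, dict]]:
    """Collect (name, args) pairs from the first candidate; text-only parts are skipped."""
    calls = []
    for part in response.candidates[0].content.parts:
        call = getattr(part, "function_call", None)
        if call:
            calls.append((call.name, dict(call.args or {})))
    return calls


# Stand-in for a real response so the sketch runs without calling the API.
fake_response = SimpleNamespace(candidates=[SimpleNamespace(content=SimpleNamespace(parts=[
    SimpleNamespace(text="Let me check.", function_call=None),
    SimpleNamespace(text=None, function_call=SimpleNamespace(
        name="get_weather_forecast",
        args={"location": "Berlin", "date": "2025-03-04"},
    )),
]))])

print(extract_function_calls(fake_response))
```

Skipping parts without a function call matters because a single response can mix text parts and function-call parts.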

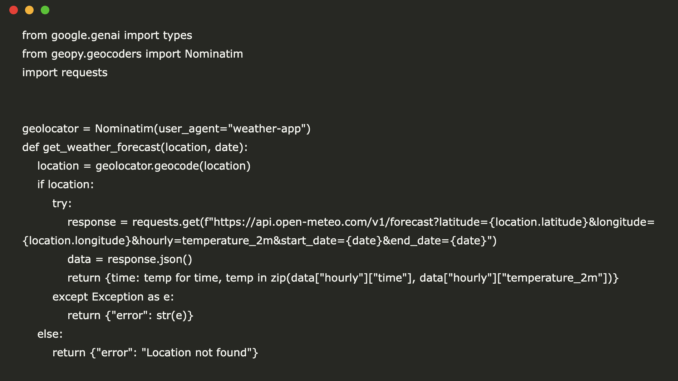

    from geopy.geocoders import Nominatim
    from google.genai import types
    import requests

    geolocator = Nominatim(user_agent="weather-app")

    def get_weather_forecast(location, date):
        # Resolve the place name to coordinates; geocode() returns None if nothing matches.
        location = geolocator.geocode(location)
        if location:
            try:
                response = requests.get(
                    f"https://api.open-meteo.com/v1/forecast?latitude={location.latitude}&longitude={location.longitude}&hourly=temperature_2m&start_date={date}&end_date={date}",
                    timeout=10,
                )
                data = response.json()
                # Map each hourly timestamp to its temperature.
                return {time: temp for time, temp in zip(data["hourly"]["time"], data["hourly"]["temperature_2m"])}
            except Exception as e:
                return {"error": str(e)}
        else:
            return {"error": "Location not found"}

    # Registry of callable tools, keyed by the name used in the declaration.
    functions = {
        "get_weather_forecast": get_weather_forecast
    }

    def call_function(function_name, **kwargs):
        return functions[function_name](**kwargs)

    def function_call_loop(prompt):
        # Start the conversation with the user's prompt.
        contents = [types.Content(role="user", parts=[types.Part(text=prompt)])]
        response = client.models.generate_content(
            model=model_id,
            config=config,
            contents=contents
        )
        for part in response.candidates[0].content.parts:
            # Add the model's turn to the conversation.
            contents.append(types.Content(role="model", parts=[part]))
            if part.function_call:
                print("Tool call detected")
                function_call = part.function_call
                print(f"Calling tool: {function_call.name} with args: {function_call.args}")
                tool_result = call_function(function_call.name, **function_call.args)
                # Wrap the tool output so it can be sent back to the model.
                function_response_part = types.Part.from_function_response(
                    name=function_call.name,
                    response={"result": tool_result},
                )
                contents.append(types.Content(role="user", parts=[function_response_part]))
                print("Calling LLM with tool results")
                func_gen_response = client.models.generate_content(
                    model=model_id, config=config, contents=contents
                )
                # Append the model's follow-up content (a Content object, not the whole response).
                contents.append(func_gen_response.candidates[0].content)
        return contents[-1].parts[0].text.strip()

    result = function_call_loop("What's the weather in Berlin today?")
    print(result)

We implement a full “agentic” loop: it sends your prompt to Gemini, inspects the response for a function call, executes get_weather_forecast (using Geopy plus an Open-Meteo HTTP request), and then feeds the tool’s result back into the model to produce and return the final conversational reply.
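The loop above handles a single round of tool calls. If the model may chain several calls, the same idea can be wrapped in a bounded loop. The sketch below shows only the control flow under simplifying assumptions: messages are plain dicts rather than SDK types, and run_tool_loop, fake_generate, and fake_forecast are hypothetical stand-ins (with made-up temperatures) so the example runs offline.

```python
from typing import Callable


def run_tool_loop(generate: Callable[[list], dict], tools: dict, prompt: str, max_rounds: int = 5) -> str:
    """Keep calling `generate` until it returns text instead of a tool call."""
    history = [{"role": "user", "text": prompt}]
    for _ in range(max_rounds):
        reply = generate(history)
        call = reply.get("function_call")
        if call is None:
            return reply["text"]
        # Record the model's request, run the tool, and feed the result back.
        history.append({"role": "model", "function_call": call})
        result = tools[call["name"]](**call["args"])
        history.append({"role": "user", "function_response": {"name": call["name"], "result": result}})
    raise RuntimeError("Tool loop did not finish within max_rounds")


def fake_generate(history: list) -> dict:
    """Stand-in model: asks for the tool once, then answers from the tool result."""
    last = history[-1]
    if "function_response" in last:
        temps = last["function_response"]["result"]
        return {"text": f"Expect a high of {max(temps.values())} degrees C."}
    return {"function_call": {"name": "get_weather_forecast",
                              "args": {"location": "Berlin", "date": "2025-03-04"}}}


def fake_forecast(location: str, date: str) -> dict:
    """Stand-in tool with made-up values, used only to exercise the loop."""
    return {f"{date}T06:00": 2.5, f"{date}T14:00": 9.0}


answer = run_tool_loop(fake_generate, {"get_weather_forecast": fake_forecast},
                       "What's the weather in Berlin today?")
print(answer)
```

The max_rounds cap is a design choice to keep a misbehaving model from calling tools indefinitely.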

Function Calling Using Python Functions

    from geopy.geocoders import Nominatim
    import requests

    geolocator = Nominatim(user_agent="weather-app")

    def get_weather_forecast(location: str, date: str) -> dict:
        """
        Retrieves the weather using Open-Meteo API for a given location (city) and a date (yyyy-mm-dd). Returns a dictionary with the time and temperature for each hour.

        Args:
            location (str): The city and state, e.g., San Francisco, CA
            date (str): The forecasting date for when to get the weather, format (yyyy-mm-dd)

        Returns:
            Dict[str, float]: A dictionary with the time as key and the temperature as value
        """
        # Resolve the place name to coordinates; geocode() returns None if nothing matches.
        location = geolocator.geocode(location)
        if location:
            try:
                response = requests.get(
                    f"https://api.open-meteo.com/v1/forecast?latitude={location.latitude}&longitude={location.longitude}&hourly=temperature_2m&start_date={date}&end_date={date}",
                    timeout=10,
                )
                data = response.json()
                return {time: temp for time, temp in zip(data["hourly"]["time"], data["hourly"]["temperature_2m"])}
            except Exception as e:
                return {"error": str(e)}
        else:
            return {"error": "Location not found"}

The get_weather_forecast function first uses Geopy’s Nominatim to convert a city-and-state string into coordinates, then sends an HTTP request to the Open-Meteo API to retrieve hourly temperature data for the given date, returning a dictionary that maps each timestamp to its corresponding temperature. It also handles errors gracefully, returning an error message if the location isn’t found or the API call fails.
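The request and parsing steps can also be separated into small pure functions, which makes them easy to test without network access. This is a sketch under that assumption: build_forecast_url and parse_hourly are hypothetical helper names, and the query parameters are the same ones used in the function above.

```python
from urllib.parse import urlencode

OPEN_METEO_URL = "https://api.open-meteo.com/v1/forecast"


def build_forecast_url(latitude: float, longitude: float, date: str) -> str:
    """Build the same request URL as above, letting urlencode handle escaping."""
    query = urlencode({
        "latitude": latitude,
        "longitude": longitude,
        "hourly": "temperature_2m",
        "start_date": date,
        "end_date": date,
    })
    return f"{OPEN_METEO_URL}?{query}"


def parse_hourly(payload: dict) -> dict:
    """Map each timestamp to its temperature, or report a malformed payload."""
    hourly = payload.get("hourly")
    if not hourly or "time" not in hourly or "temperature_2m" not in hourly:
        return {"error": "Unexpected response format"}
    return dict(zip(hourly["time"], hourly["temperature_2m"]))
```

Returning an error dictionary for a malformed payload keeps the tool's contract the same as in the original function: the model always receives a dictionary it can describe to the user.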

    config = GenerateContentConfig(
        system_instruction="You are a helpful assistant that can help with weather related questions. Today is 2025-03-04.",  # to give the LLM context on the current date.
        tools=[get_weather_forecast],
        automatic_function_calling={"disable": True}
    )

This config registers your Python get_weather_forecast function as a callable tool. It sets a clear system prompt (including the date) for context, while disabling “automatic_function_calling” so that Gemini will emit the function call payload instead of invoking it internally.
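Passing a plain Python function works because a declaration can be inferred from its signature and docstring. To make that idea concrete, here is a rough sketch using the standard library's inspect module. It illustrates the concept only and is not the SDK's actual implementation; declaration_from_function and its type table are hypothetical, and unknown annotations fall back to "string".

```python
import inspect

# Python annotations mapped to JSON Schema type names (basic types only).
_PY_TO_JSON = {str: "string", int: "integer", float: "number", bool: "boolean"}


def declaration_from_function(func) -> dict:
    """Build a JSON-Schema-style declaration from a function's signature and docstring."""
    properties, required = {}, []
    for name, param in inspect.signature(func).parameters.items():
        properties[name] = {"type": _PY_TO_JSON.get(param.annotation, "string")}
        # Parameters without a default value are treated as required.
        if param.default is inspect.Parameter.empty:
            required.append(name)
    return {
        "name": func.__name__,
        "description": inspect.getdoc(func) or "",
        "parameters": {"type": "object", "properties": properties, "required": required},
    }


def get_weather_forecast(location: str, date: str) -> dict:
    """Retrieves the weather for a given location and date (yyyy-mm-dd)."""
    return {}  # body omitted; only the signature and docstring matter here


print(declaration_from_function(get_weather_forecast))
```

This is also why type hints and a descriptive docstring are worth writing for any function you register as a tool: they are the only description of the tool the model gets.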

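    r = client.models.generate_content(
        model=model_id,
        config=config,
        contents="What's the weather in Berlin today?"
    )
    # With automatic calling disabled, the parts contain the raw function call.
    for part in r.candidates[0].content.parts:
        print(part.function_call)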

By sending the prompt with your custom config (including the Python tool but with automatic calls disabled), this snippet captures Gemini’s raw function‐call decision. Then it loops over each response part to print out the .function_call object, letting you inspect exactly which tool the model wants to invoke and with what arguments.

    config = GenerateContentConfig(
        system_instruction="You are a helpful assistant that uses tools to access and retrieve information from a weather API. Today is 2025-03-04.",  # to give the LLM context on the current date.
        tools=[get_weather_forecast],
    )

    r = client.models.generate_content(
        model=model_id,
        config=config,
        contents="What's the weather in Berlin today?"
    )
    print(r.text)

With this config (which includes your get_weather_forecast function and leaves automatic calling enabled by default), calling generate_content will have Gemini invoke your weather tool behind the scenes and then return a natural‐language reply. Printing r.text outputs that final response, including the actual temperature forecast for Berlin on the specified date.

    config = GenerateContentConfig(
        system_instruction="You are a helpful assistant that uses tools to access and retrieve information from a weather API.",
        tools=[get_weather_forecast],
    )

    # The date and user details go into the prompt instead of the system instruction.
    prompt = """
    Today is 2025-03-04. You are chatting with Andrew, you have access to more information about him.

    User Context:
    - name: Andrew
    - location: Nuremberg

    User: Can I wear a T-shirt later today?"""

    r = client.models.generate_content(
        model=model_id,
        config=config,
        contents=prompt
    )
    print(r.text)

We extend your assistant with personal context, telling Gemini Andrew’s name and location (Nuremberg) and asking if it’s T-shirt weather, while still using the get_weather_forecast tool under the hood. It then prints the model’s natural-language recommendation based on the actual forecast for that day.
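If the user details come from your application rather than a hard-coded string, the prompt can be assembled from a dictionary. The sketch below is one possible way to do that; build_prompt is a hypothetical helper and the wording of the template simply mirrors the prompt used in this section.

```python
def build_prompt(today: str, user_context: dict, question: str) -> str:
    """Assemble the date, the known user details, and the question into one prompt."""
    name = user_context.get("name", "the user")
    # One "- key: value" line per known detail about the user.
    context_lines = "\n".join(f"- {key}: {value}" for key, value in user_context.items())
    return (
        f"Today is {today}. You are chatting with {name}, "
        "you have access to more information about them.\n\n"
        f"User Context:\n{context_lines}\n\n"
        f"User: {question}"
    )


prompt = build_prompt(
    "2025-03-04",
    {"name": "Andrew", "location": "Nuremberg"},
    "Can I wear a T-shirt later today?",
)
print(prompt)
```

In a live application, `datetime.date.today().isoformat()` would supply the date in the yyyy-mm-dd format the tool expects.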

In conclusion, we now know how to define functions (via JSON schema or Python signatures), configure Gemini 2.0 Flash to detect and emit function calls, and implement the “agentic” loop that executes those calls and composes the final response. With these building blocks, we can extend any LLM into a capable, tool-enabled assistant that automates workflows, retrieves live data, and interacts with your code or APIs as effortlessly as chatting with a colleague.



Asif Razzaq is the CEO of Marktechpost Media Inc. As a visionary entrepreneur and engineer, Asif is committed to harnessing the potential of Artificial Intelligence for social good. His most recent endeavor is the launch of an Artificial Intelligence Media Platform, Marktechpost, which stands out for its in-depth coverage of machine learning and deep learning news that is both technically sound and easily understandable by a wide audience. The platform boasts over 2 million monthly views, illustrating its popularity among audiences.
